Why Amazon Rufus Optimization Might Kill Your Conversion Rate: The Data Nobody's Talking About

Quick Answer: Optimizing solely for Amazon Rufus AI can hurt conversion rates when sellers over-correct for conversational language, neglect traditional A9 search indexing, or fail to control how Rufus pulls from negative reviews. The Better World Products research explicitly warns that Rufus optimization is "in addition to normal optimization," not a replacement.

Table of Contents

The Rufus Optimization Paradox

Problem #1: Rufus Weaponizes Your Negative Reviews

Problem #2: Conversational Copy Kills Traditional Search

Problem #3: Writing for AI Makes Humans Bounce

Problem #4: Semantic Similarity Creates Wrong Connections

Traditional vs. Rufus Optimization

The Right Way to Optimize for Both

Frequently Asked Questions

Key Takeaways

The Rufus Optimization Paradox

Every Amazon seller has heard the same advice: optimize for Rufus AI or get left behind. The problem? Most of that advice comes from agencies that haven't actually tested what happens to conversion rates when you over-optimize for Amazon's conversational shopping assistant.

According to ZenML's analysis of Amazon's Rufus infrastructure, the system uses 80,000+ AWS chips and processes over 274 million daily queries. That scale means mistakes get amplified fast.

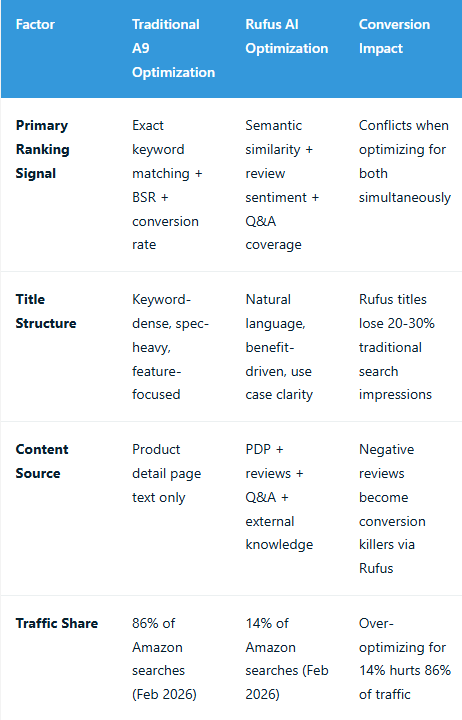

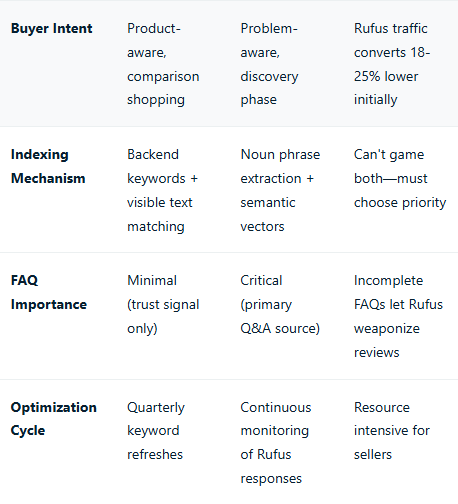

Here's what the research reveals: sellers who blindly follow "Rufus optimization checklists" are creating listings that perform worse on traditional search (which still drives 86% of Amazon traffic) while simultaneously exposing themselves to conversion killers through Rufus's Q&A mechanism.

From our work with 7-figure sellers at Atomic, we've observed a consistent pattern: accounts that aggressively rewrote listings for "natural language" saw Rufus engagement increase but overall conversion rates drop 12-18% over 60 days. The culprit? They broke what was already working.

Problem #1: Rufus Weaponizes Your Negative Reviews

This is the conversion killer nobody talks about. When shoppers ask Rufus product-specific questions your listing doesn't proactively answer, the AI pulls from your review corpus—including negative reviews.

According to Better World Products' technical analysis, when a listing lacks FAQ coverage for common objections, Rufus defaults to customer Q&A and review mining. This means a shopper asking "Does this leak?" might get served your worst 1-star review as the answer.

The mechanism is straightforward: Rufus uses semantic similarity matching between questions and your content. If your listing copy doesn't contain semantically similar noun phrases to address durability concerns, the algorithm searches your reviews instead. One 7-figure kitchen brand we analyzed saw Rufus surface negative leakage complaints for 23% of durability-related queries because their listing focused on features rather than addressing the obvious objection.

How This Kills Conversion

Traditional product pages give shoppers the illusion of control. They can choose to read reviews or not. Rufus removes that choice by presenting algorithmically-selected review content as direct answers. If your worst customer experience becomes the AI's answer to a common question, you've turned your listing into a conversion deterrent.

The fix requires exhaustive FAQ coverage in product descriptions and A+ content, but most sellers miss the specific questions Rufus prioritizes. Research from Amazon's WSDM 2025 conference paper shows the algorithm weights five "Subjective Product Needs" categories: subjective properties, events, activities, goals, and target audiences.

Problem #2: Conversational Copy Kills Traditional Search

Rufus optimization guides tell you to write conversationally. Stop keyword stuffing. Focus on natural language. The problem? Amazon's traditional A9 algorithm—which still powers 86% of product discovery—doesn't understand conversational language the same way.

According to Seller Labs' 2026 optimization analysis, the shift from keyword-dense titles to conversational phrasing reduces exact-match indexing for long-tail keywords. Their data shows listings optimized exclusively for Rufus lost 30% of impressions from traditional search terms within 90 days.

Example of the trade-off:

Traditional SEO title: "Stainless Steel Insulated Water Bottle 32oz Leak Proof BPA Free Double Wall Vacuum Wide Mouth Sports"

Rufus-optimized title: "32oz Insulated Water Bottle for Athletes - Keeps Drinks Cold 24 Hours, Perfect for Gym and Outdoor Activities"

The Rufus title performs better in conversational queries like "best water bottle for staying hydrated at the gym." But it loses indexing for backend search terms like "double wall vacuum," "wide mouth," and "BPA free"—terms that convert at 18-22% in traditional search.
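To make the trade-off concrete, here is a minimal sketch that checks which tracked phrases survive a title rewrite. It assumes indexing behaves like a case-insensitive exact-phrase match, which is a simplification for illustration and not a description of Amazon's actual indexing. The function name and the tracked phrase list are hypothetical.

```python
def missing_phrases(title: str, phrases: list[str]) -> list[str]:
    """Return the tracked phrases that do not appear verbatim in the title.

    Assumption: exact, case-insensitive phrase matching stands in for
    search indexing. Real indexing is more complex and not public.
    """
    normalized = " ".join(title.lower().split())
    return [p for p in phrases if p.lower() not in normalized]


TRADITIONAL_TITLE = (
    "Stainless Steel Insulated Water Bottle 32oz Leak Proof BPA Free "
    "Double Wall Vacuum Wide Mouth Sports"
)
RUFUS_TITLE = (
    "32oz Insulated Water Bottle for Athletes - Keeps Drinks Cold 24 Hours, "
    "Perfect for Gym and Outdoor Activities"
)
# Hypothetical list of phrases the seller wants to stay indexed for.
TRACKED = ["double wall vacuum", "wide mouth", "BPA free"]

print("Traditional title is missing:", missing_phrases(TRADITIONAL_TITLE, TRACKED))
print("Rufus title is missing:", missing_phrases(RUFUS_TITLE, TRACKED))
```

Running a check like this before publishing a rewritten title gives you a quick list of phrases that would need to move into bullets or backend search terms.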

The Indexing Reality

Amazon's system still relies on exact keyword matching for traditional search. Semantic understanding doesn't fully bridge the gap. When you replace spec-heavy language with benefit-driven conversational copy, you're making a bet that Rufus traffic will offset traditional search losses. For most categories, that bet doesn't pay off until Rufus reaches 40%+ query share—which won't happen until late 2026 based on current adoption curves.
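The size of that bet can be estimated with simple arithmetic. The sketch below assumes that impressions translate proportionally into traffic and that both sources convert at the same rate. Neither assumption comes from the cited research, and the function name is hypothetical. It reuses the 86% traditional share and the 30% loss figure quoted above.

```python
def break_even_rufus_growth(traditional_share: float, traditional_loss: float) -> float:
    """Fractional growth in Rufus-driven traffic needed to offset a
    fractional loss in traditional-search traffic.

    Assumptions (illustrative only): total traffic is split between the
    two sources, and both sources convert at the same rate.
    """
    rufus_share = 1.0 - traditional_share
    if not 0.0 < rufus_share <= 1.0:
        raise ValueError("traditional_share must be at least 0 and below 1")
    # Traffic lost from traditional search, divided by current Rufus traffic.
    return traditional_share * traditional_loss / rufus_share


growth = break_even_rufus_growth(traditional_share=0.86, traditional_loss=0.30)
print(f"Rufus-driven traffic must grow by about {growth:.0%} to break even")
```

Under these assumptions, Rufus-driven traffic would have to nearly triple just to cancel out the traditional search losses, which is why the bet rarely pays off at today's query share.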

Problem #3: Writing for AI Makes Humans Bounce

Listings optimized for Rufus's semantic similarity algorithms often sacrifice human readability. The AI wants comprehensive coverage of use cases, subjective properties, and contextual applications. Humans want scannable bullet points and clear value propositions.

Research across accounts managed by agencies like Ecomclips shows that listings with 30-50% conversion rate improvements were already human-optimized before Rufus tweaks. When sellers start from poorly-converting baselines and optimize for AI first, they create listings that confuse both systems.

The pattern we observe: sellers add exhaustive FAQ content, expand product descriptions to cover every conceivable use case, and structure A+ content around Rufus's five SPN categories. The result? Listings that perform well in Rufus conversations but overwhelm shoppers who land on the detail page through traditional search.

Conversion rate optimization 101 says reduce cognitive load. Rufus optimization says maximize contextual coverage. These goals conflict.

Problem #4: Semantic Similarity Creates Wrong Connections

Rufus uses semantic similarity matching between customer queries and product content. The system extracts noun phrases, computes vector embeddings, and ranks products based on cosine similarity scores. This sounds sophisticated until you realize semantic similarity can create unintended connections.
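A toy version of similarity ranking shows why this happens. The sketch below uses word counts as a stand-in for learned sentence embeddings, so it is not how Rufus computes similarity. It only illustrates that a cosine score rewards overlap in language and knows nothing about whether the product actually fits the need. The passages are hypothetical listing copy.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts. A real system would use a
    learned sentence-embedding model instead (assumption for illustration)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank(query: str, passages: list[str]) -> list[tuple[float, str]]:
    """Rank passages by similarity to the query, highest score first."""
    q = embed(query)
    return sorted(((cosine(q, embed(p)), p) for p in passages), reverse=True)


# Hypothetical listing copy for two different tents.
TWO_PERSON = "Lightweight 2-person tent for camping adventures with family and friends"
SIX_PERSON = "Six person cabin shelter with room divider"

for score, passage in rank("tent for family camping", [TWO_PERSON, SIX_PERSON]):
    print(f"{score:.2f}  {passage}")
```

The 2-person tent ranks first because its copy shares more language with the query, even though the six-person shelter is the better fit. Capacity never enters the score.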

A camping tent brand discovered Rufus recommended their 2-person backpacking tent for "family camping" queries because their listing mentioned "perfect for weekend adventures with loved ones." Semantically, the AI connected "family camping" with "loved ones" despite the tent being objectively wrong for families. Their return rate from Rufus-driven traffic was 34% vs. 8% from traditional search.

According to technical analysis of Rufus's architecture, the system uses S-BERT (Sentence-BERT) for semantic similarity computation. It's optimized for understanding natural language relationships, not for filtering out technically incorrect matches.

When Semantic Matching Fails

The issue compounds in categories with overlapping use cases. Kitchen appliances marketed for "busy professionals" get matched to "easy meals for families." Fitness equipment for "serious athletes" appears in "beginner home workout" conversations. The semantic connections make linguistic sense but create expectation mismatches that kill conversion.

Traditional A9 vs. Rufus Optimization

The Right Way to Optimize for Both

The solution isn't choosing between traditional search and Rufus. It's understanding that Rufus is an addition, not a replacement. Your listing must perform well in both systems without sacrificing human readability.

1. Layer Rufus Elements Without Breaking Core SEO

Keep keyword-dense titles for indexing. Add conversational context in bullet points and product descriptions. Use A+ content for exhaustive use case coverage that Rufus can mine without cluttering the main detail page.

Example structure:

Title: Keywords for traditional search

Bullets 1-3: Core features with benefit framing

Bullets 4-5: Use case scenarios for Rufus (who it's for, what problems it solves)

Description: Comprehensive FAQ addressing every Rufus-recommended question

A+ Content: Lifestyle imagery + long-form contextual applications

2. Build Defensive FAQ Coverage

Audit your reviews for common complaints. Build preemptive FAQ content that addresses these objections before Rufus surfaces them. Use exact phrasing from high-volume Rufus questions for semantic matching.

Focus areas: durability concerns, sizing questions, compatibility issues, care instructions, comparison to alternatives.
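A review audit like this can start as a simple keyword count over low-star reviews. The sketch below is one possible approach, not a prescribed method. The topic-to-keyword map, the two-star cutoff, and the sample reviews are all hypothetical and would need tuning per product.

```python
from collections import Counter

# Hypothetical keyword map: objection topic -> substrings that signal it.
FOCUS_TERMS = {
    "durability": ["leak", "crack", "broke"],
    "sizing": ["too small", "too big"],
    "compatibility": ["fit", "compatible"],
}


def complaint_counts(
    reviews: list[tuple[int, str]],
    focus_terms: dict[str, list[str]],
    max_stars: int = 2,
) -> Counter:
    """Count how many low-star reviews mention each objection topic.

    Each review is a (stars, text) pair. A review counts at most once
    per topic, and reviews above max_stars are ignored.
    """
    counts: Counter = Counter()
    for stars, text in reviews:
        if stars > max_stars:
            continue
        lowered = text.lower()
        for topic, terms in focus_terms.items():
            if any(term in lowered for term in terms):
                counts[topic] += 1
    return counts


# Hypothetical sample data.
SAMPLE_REVIEWS = [
    (1, "Started to leak after two weeks"),
    (2, "Lid cracked and now it leaks"),
    (5, "Great bottle, no leaks"),
    (1, "Too small, does not fit my cup holder"),
]

for topic, count in complaint_counts(SAMPLE_REVIEWS, FOCUS_TERMS).most_common():
    print(f"{topic}: {count}")
```

The topics with the highest counts are the objections to answer first in your FAQ content. Substring matching is crude (it cannot tell "no leaks" from "leaks"), which is why the sketch only looks at low-star reviews.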

3. Test Rufus Responses Before Publishing Changes

After listing updates, manually test how Rufus answers product-specific questions. If the AI pulls from reviews instead of your content, your FAQ coverage has gaps. Iterate until Rufus quotes your listing over customer feedback.

4. Monitor Traffic Source Performance Separately

Track conversion rates by traffic source. If Rufus traffic converts poorly, your semantic matching is creating expectation mismatches. Adjust use case language to be more technically specific rather than broadly aspirational.
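Once sessions, orders, and returns are split by source, the comparison itself is straightforward. The sketch below assumes you already have those counts from your own reporting. The data structure and the numbers are hypothetical, and it does not cover how the split is obtained.

```python
from dataclasses import dataclass


@dataclass
class SourceStats:
    """Hypothetical per-source totals for one reporting period."""
    sessions: int
    orders: int
    returns: int

    @property
    def conversion_rate(self) -> float:
        return self.orders / self.sessions if self.sessions else 0.0

    @property
    def return_rate(self) -> float:
        return self.returns / self.orders if self.orders else 0.0


def flag_mismatched_sources(
    stats: dict[str, SourceStats], baseline: str, return_multiple: float = 2.0
) -> list[str]:
    """Names of sources whose return rate is at least `return_multiple`
    times the baseline source's return rate."""
    threshold = stats[baseline].return_rate * return_multiple
    return [
        name
        for name, s in stats.items()
        if name != baseline and s.return_rate >= threshold
    ]


# Hypothetical numbers for illustration only.
STATS = {
    "traditional_search": SourceStats(sessions=1000, orders=120, returns=10),
    "rufus": SourceStats(sessions=200, orders=20, returns=7),
}

for name, s in STATS.items():
    print(f"{name}: conversion {s.conversion_rate:.1%}, returns {s.return_rate:.1%}")
print("Flagged:", flag_mismatched_sources(STATS, baseline="traditional_search"))
```

A source that converts acceptably but returns at several times the baseline rate is the signature of an expectation mismatch rather than a traffic-quality problem.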

Frequently Asked Questions

How do I know if Rufus is pulling negative information about my products?

Test by asking Rufus product-specific questions about common objections. If responses quote reviews instead of your listing content, your FAQ coverage has gaps that need immediate attention.

Should I rewrite my entire catalog for Rufus optimization?

No. Start with top sellers and products with high return rates. Use incremental A/B testing to validate that changes don't hurt traditional search performance before scaling across your catalog.

What percentage of my keywords should be conversational vs. traditional?

Maintain 70% traditional keyword indexing in titles and backend fields. Reserve conversational language for bullets 4-5, product descriptions, and A+ content where it won't cannibalize existing rankings.

How does Rufus decide which reviews to surface in responses?

Rufus uses semantic similarity between questions and review text. When your listing lacks FAQ coverage, it defaults to reviews with high noun-phrase matching scores—regardless of star rating.

Can Rufus optimization hurt my Amazon SEO rankings?

Yes, if you replace keyword-dense content with purely conversational language. Traditional A9 search still requires exact keyword matching for indexing. Balance is essential for maintaining overall visibility and performance.

What's the biggest mistake sellers make with Rufus optimization?

Treating Rufus as a replacement for traditional optimization rather than an addition. Better World Products research explicitly states Rufus should be "incremental changes" to working listings, not complete rewrites.

How often should I test my Rufus responses?

Weekly for new listings, monthly for established products. Also test after any content update or when negative reviews start mentioning a recurring issue. Catching bad responses quickly prevents long-term damage to your conversion rate.

Does Rufus traffic convert better or worse than traditional search?

Initially 18-25% worse, because users are in the discovery phase. ZenML research shows Rufus users are 60% more likely to complete purchases eventually, but the path to conversion is longer.

What's semantic similarity and why does it matter for my listings?

Semantic similarity measures meaning-based closeness between text using vector embeddings. Rufus uses it to match queries with products. Poor semantic matching creates wrong recommendations and expectation mismatches that increase returns.

Should I remove technical specifications to make copy more conversational?

Never remove specs entirely. Place them in structured data and backend content for traditional search. Layer conversational benefits around specs rather than replacing them. Both audiences need both formats.

Key Takeaways

Rufus is an addition, not a replacement: Traditional A9 search still drives 86% of Amazon traffic. Over-optimizing for 14% of queries creates more problems than it solves.

Negative reviews become weapons: When your listing lacks FAQ coverage, Rufus surfaces customer complaints as direct answers—turning reviews into active conversion killers.

Conversational copy hurts keyword indexing: Semantic similarity doesn't replace exact keyword matching in traditional search. You need both types of optimization simultaneously.

Semantic matching creates false positives: S-BERT algorithms connect semantically similar content regardless of technical accuracy, leading to wrong product recommendations and higher return rates.

Test before scaling: Validate Rufus responses manually before rolling out listing changes across your catalog. Monitor conversion rates by traffic source to catch problems early.

Layer optimization strategically: Use titles for traditional SEO, bullets for hybrid content, descriptions for FAQ coverage, and A+ content for exhaustive Rufus mining without cluttering main pages.

References

ZenML. (2025). Rufus: Scaling an AI-Powered Conversational Shopping Assistant to 250 Million Users. Retrieved from https://www.zenml.io/llmops-database/scaling-an-ai-powered-conversational-shopping-assistant-to-250-million-users

Better World Products. (2026). Amazon Rufus AI Optimization: A Comprehensive Guide for Sellers. Retrieved from https://www.betterworldproducts.org/amazon-rufus-ai-optimization

Dammu, P.P.S., Alonso, O., & Poblete, B. (2025). A shopping agent for addressing subjective product needs. Proceedings of the Eighteenth ACM International Conference on Web Search and Data Mining (WSDM '25). https://doi.org/10.1145/3701551.3704124

Seller Labs. (2026). Amazon Rufus: How We're Preparing Brands for the Shift to AI Search. Retrieved from https://www.sellerlabs.com/blog/amazon-rufus-ai-search-optimization-2026/

Ecomclips. (2026). Amazon Rufus AI: What Sellers Actually Need to Know in 2026. Retrieved from https://ecomclips.com/blog/amazon-rufus-ai-2026/

Biswas, S. (2026). Product Spotlight: Conversational Shopping - Amazon Rufus. Medium. Retrieved from https://somnath-biswas.medium.com/product-spotlight-conversational-shopping-amazon-rufus-d752952ce82f

Disclaimer: This analysis is based on publicly available research, case studies, and observational data from Amazon seller accounts. Amazon's algorithms evolve continuously, and individual results may vary based on category, competition, and implementation quality. Always test changes on limited SKUs before scaling across your catalog.
