Fashion Sellers’ Rufus Playbook: Visual Search + Style Recommendation Optimization

Fashion Sellers' Rufus Playbook: What Amazon's Visual AI Actually Reads in Your Apparel Listings

Quick Answer: Amazon Rufus processes fashion listings through two parallel systems: text-based semantic matching for occasion, fit, and audience signals, and Amazon Rekognition for visual attribute extraction from images. Fashion sellers who optimize only copy, ignoring what their product images actually communicate to computer vision, are leaving a significant portion of Rufus visibility on the table.

Table of Contents

Why Fashion Is the Hardest Category for Rufus to Get Right

How Amazon's Visual Recognition Stack Processes Apparel Images

The 5 Subjective Product Needs Amazon Uses to Rank Fashion Listings

Occasion Matching vs. Keyword Matching: A Critical Distinction

Image Optimization That Changes What Rufus Recommends

Fashion Listing Optimization: Old Approach vs. Rufus-Aware Approach

The Gender Mismatch Problem and What to Do About It

FAQs

Key Takeaways

References

Why Fashion Is the Hardest Category for Rufus to Get Right

A customer searching for "a dress that makes me feel confident at a fall wedding" is expressing something that exists almost entirely outside traditional catalog data. There is no attribute field for "makes me feel confident." There is no keyword that maps cleanly to the subjective experience of wearing a garment at a specific occasion in a specific season.

This is the core challenge Amazon's Rufus team has been working to solve — and published research gives us a clear window into how far they've gotten. According to research presented at the 2025 ACM International Conference on Web Search and Data Mining (WSDM '25), Amazon built a specialized agent specifically to address what they call "Subjective Product Needs" — the class of queries where customers express personal, contextual, or emotional requirements rather than technical specifications.

Fashion and apparel are among the most densely subjective categories on the platform. The same dress might be "perfect for a beach wedding" or "too casual for a formal dinner" — not because its attributes changed, but because the occasion context changed. Most fashion listings are still written as if A9 keyword matching is the only game in town. That creates a gap competitors haven't yet noticed.

As we covered in our breakdown of how Cosmo processes backend structured fields, the AI systems reading your listing are doing fundamentally different work than traditional search algorithms. Fashion sellers who understand this gap can pull ahead in crowded categories.

How Amazon's Visual Recognition Stack Processes Apparel Images

Here's something most fashion sellers don't know: Rufus does not rely solely on your copy to understand what your product looks like. Testing has confirmed that Rufus actively pulls images into chat responses and uses them as evidence when answering customer questions. The visual pipeline that enables this involves Amazon's own Rekognition service.

Amazon Rekognition — the same AWS computer vision technology available to enterprise clients — is integrated into the product listing infrastructure. It can identify objects, extract text from images, detect colors, recognize clothing categories, and analyze scene composition. When a customer asks Rufus "Is this dress appropriate for a black-tie event?" Rufus isn't guessing from your bullet points alone. It's cross-referencing your visual assets against its understanding of what black-tie formal wear looks like.

This has specific, actionable implications for fashion sellers:

1. Text overlays on images are read literally. If you use an infographic slide with text like "Flowy summer silhouette" or "Stretch fabric — great for travel," Rekognition reads that text and it becomes part of the data Rufus has access to. It's essentially a hidden keyword field inside your image assets.

2. Lifestyle context matters to visual AI, not just to shoppers. A dress photographed at a backyard party communicates occasion context that Rekognition can identify. That context feeds Rufus's ability to answer occasion-based queries accurately.

3. Model demographics and styling choices are visible to the AI. Published user research from a 2025 human-computer interaction study (cited in the References) found that Rufus users flagged frustration when "it got my gender wrong and was trying to show me male clothing at first." This tells us Rufus is attempting gender inference from listing signals — and when those signals are ambiguous or absent, mismatches happen.

Key insight: Your lifestyle images aren't just for human shoppers. Amazon's visual AI is extracting occasion signals, gender cues, styling context, and label text from every image you upload. Optimizing your image deck for AI readability is now a legitimate ranking strategy.
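To get a rough sense of what text extraction might pick up from an infographic slide, you can run the image through Rekognition's DetectText yourself and scan the result for occasion language. The sketch below skips the AWS call and works on a dictionary shaped like a DetectText response (`TextDetections` entries with `DetectedText`, `Type`, and `Confidence`). The sample response, the `OCCASION_TERMS` list, and the 80% confidence cutoff are all illustrative assumptions, and nothing here should be read as a description of Amazon's internal pipeline.

```python
# Hypothetical occasion vocabulary; replace with terms relevant to your catalog.
OCCASION_TERMS = ("wedding", "travel", "summer", "office", "date night", "outdoor")


def overlay_lines(response, min_confidence=80.0):
    """Return LINE-level text detections at or above the confidence cutoff."""
    return [
        d["DetectedText"]
        for d in response.get("TextDetections", [])
        if d.get("Type") == "LINE" and d.get("Confidence", 0.0) >= min_confidence
    ]


def occasion_terms_found(lines, terms=OCCASION_TERMS):
    """Return the occasion terms that appear in the detected overlay text."""
    text = " ".join(lines).lower()
    return sorted(t for t in terms if t in text)


# Illustrative sample shaped like a DetectText response (not real output).
sample_response = {
    "TextDetections": [
        {"DetectedText": "Flowy summer silhouette", "Type": "LINE", "Confidence": 98.2},
        {"DetectedText": "Flowy", "Type": "WORD", "Confidence": 98.5},
        {"DetectedText": "Stretch fabric, great for travel", "Type": "LINE", "Confidence": 95.1},
        {"DetectedText": "blurry caption", "Type": "LINE", "Confidence": 41.0},
    ]
}

lines = overlay_lines(sample_response)
found = occasion_terms_found(lines)
print(lines)
print(found)
```

In a real audit the response would come from a Rekognition client call on your own image bytes. If a slide you designed around an occasion returns no occasion terms, or its text only appears below the confidence cutoff, the overlay is probably too small, too stylized, or too low-contrast to be read reliably.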

The 5 Subjective Product Needs Amazon Uses to Rank Fashion Listings

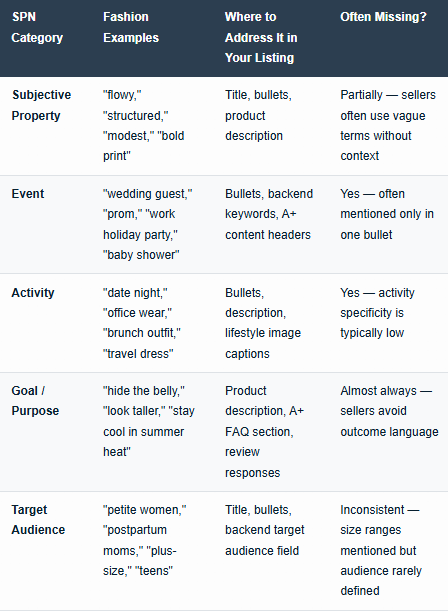

The Amazon WSDM '25 research paper explicitly identifies five "Subjective Product Need" (SPN) categories that Rufus uses to classify and match queries to products. For fashion sellers, each SPN maps to specific content requirements that most listings currently miss.

The classifier was trained on 1,000 queries across five categories (5,000 total labels) and was designed to perform at human-annotator level. This isn't a vague algorithm — it's a structured taxonomy that Rufus applies to your product data to determine recommendation eligibility.

The key observation here: fashion sellers systematically underaddress the Goal/Purpose SPN. The instinct is to describe what the garment is rather than what wearing it achieves for the customer. Rufus's SPN classifier is specifically looking for outcome language — and if it's absent from your listing, you won't surface for intent-based queries even when your product is genuinely the right answer.
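A quick way to spot which SPN categories your copy skips is a simple coverage check. The sketch below uses the five category names from the paper, but the cue phrases in `SPN_CUES` are hypothetical examples chosen for illustration. This is a keyword heuristic for your own auditing, not a reproduction of Amazon's classifier.

```python
import re

# Hypothetical cue phrases per SPN category; tune these for your own catalog.
SPN_CUES = {
    "Subjective Property": ("flowy", "structured", "soft", "elegant"),
    "Event": ("wedding", "cocktail party", "graduation", "black-tie"),
    "Activity": ("travel", "dancing", "commuting", "walking"),
    "Goal/Purpose": ("feel confident", "stay cool", "look polished"),
    "Target Audience": ("women", "men", "petite", "plus size", "tall"),
}


def spn_coverage(listing_text, cues=SPN_CUES):
    """Map each SPN category to the cue phrases found as whole words."""
    text = listing_text.lower()
    return {
        category: [
            phrase for phrase in phrases
            if re.search(r"\b" + re.escape(phrase) + r"\b", text)
        ]
        for category, phrases in cues.items()
    }


def missing_spns(coverage):
    """List the categories for which no cue phrase was found."""
    return [category for category, hits in coverage.items() if not hits]


listing = (
    "Flowy floral midi dress for women. Ideal wedding guest dress, "
    "comfortable for dancing and travel."
)

coverage = spn_coverage(listing)
print(coverage)
print(missing_spns(coverage))
```

The sample listing describes the garment, the event, the activity, and the audience, yet says nothing about what wearing it achieves, so Goal/Purpose is the one category flagged as missing. Whole-word matching matters here: a plain substring test would find "men" inside "women" and report a false audience signal.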

Occasion Matching vs. Keyword Matching: A Critical Distinction

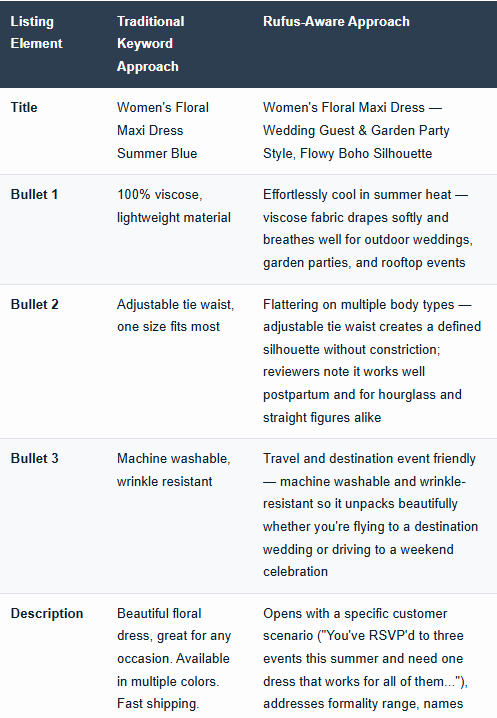

Traditional Amazon search worked on keyword proximity. If a shopper typed "wedding guest dress blue" and your title contained those words, you had a shot at appearing. Rufus works differently — it reasons about intent.

When a customer asks Rufus "What should I wear to a casual outdoor wedding in September?", the AI is not looking for listings that contain the word "September" or the phrase "casual outdoor wedding." It's building a semantic picture of the requirement: relaxed formality, warm-weather-appropriate, perhaps garden party or bohemian aesthetic, comfortable enough for standing and walking on grass.

This means the optimization task for fashion sellers has changed. Your listing needs to answer the questions Rufus is asking internally on the customer's behalf:

What occasions is this garment genuinely suited for?

What is the formality level, and how does it translate to real contexts?

What type of person, body, and lifestyle does this product serve?

What problems does wearing this solve?

The brands getting pulled into Rufus recommendations aren't necessarily the ones with the most keywords. They're the ones whose listings most clearly answer those implicit questions — because that's the data Rufus can extract and match to customer intent.
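The difference between the two matching styles can be shown with a toy comparison. The sketch below scores the same listing two ways: literal word overlap with the query, and the share of inferred requirements that the listing's stated attributes satisfy. The `requirements` dictionary is a hand-written stand-in for what a language model might infer from the query, and the attribute names are hypothetical. It is meant to illustrate the idea, not to model Rufus's actual scoring.

```python
import re


def keyword_overlap(query, listing_text):
    """Fraction of query words that literally appear in the listing text."""
    query_words = set(re.findall(r"[a-z]+", query.lower()))
    listing_words = set(re.findall(r"[a-z]+", listing_text.lower()))
    if not query_words:
        return 0.0
    return len(query_words & listing_words) / len(query_words)


def intent_match(requirements, listing_attrs):
    """Fraction of inferred requirements the listing's attributes satisfy."""
    if not requirements:
        return 0.0
    met = sum(
        1 for name, value in requirements.items()
        if value in listing_attrs.get(name, ())
    )
    return met / len(requirements)


query = "What should I wear to a casual outdoor wedding in September?"

# Hand-written stand-in for requirements inferred from the query (assumption).
requirements = {"formality": "relaxed", "setting": "garden", "season": "early fall"}

listing_text = (
    "Breathable midi dress for garden parties and relaxed ceremonies "
    "on warm early fall days"
)
# Hypothetical structured view of what the listing explicitly states.
listing_attrs = {
    "formality": {"relaxed", "semi-formal"},
    "setting": {"garden", "beach"},
    "season": {"summer", "early fall"},
}

print(keyword_overlap(query, listing_text))
print(intent_match(requirements, listing_attrs))
```

The listing shares no words with the query, so the keyword score is zero, yet it satisfies every inferred requirement. That is the kind of listing a keyword-first approach would overlook and an intent-first approach could surface, provided the occasion, formality, and season are actually stated somewhere the system can read.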

This connects directly to the pattern we documented in our analysis of how Rufus optimization can backfire on conversion rates — over-indexing for AI recommendation signals at the expense of human readability creates its own problems. Fashion is a category where that tension is especially acute.

Image Optimization That Changes What Rufus Recommends

Given what we know about how Amazon's visual stack reads product images, fashion sellers can take specific steps to improve what the AI extracts from their image decks.

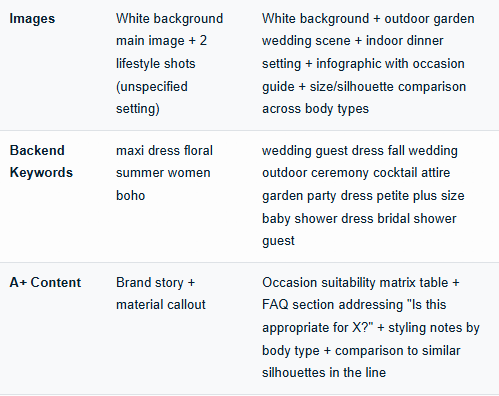

Lifestyle image curation by occasion: Rather than shooting a single lifestyle scene and applying it across all marketing channels, consider creating distinct lifestyle images keyed to your top two or three occasion targets. A dress positioned as both "wedding guest" and "cocktail party appropriate" benefits from separate visual contexts — a garden ceremony scene and an indoor venue scene each communicate different things to visual AI and to human shoppers simultaneously.

Text overlays with semantic intent: Infographic slides are standard in fashion, but most sellers use them for size guides and material specs. That's useful, but it leaves value on the table. An overlay reading "Effortless summer to fall transition — styled for outdoor events and evening dinners" gives Rekognition occasion and activity data in a format it can read directly from the image.

Consistent model styling: The gender mismatch problem documented in user research points to an optimization opportunity. If your fashion product serves a specific gender and audience, every image in your gallery should make that unambiguous. Mixed styling signals create classification confusion for Rufus that shows up as recommendation mismatches.

Alt text as structured data: Amazon processes alt text on A+ content images. This is frequently overlooked in fashion. Writing descriptive alt text that explicitly states the occasion context ("Woman in a floral midi dress at an outdoor garden wedding ceremony") creates another data layer Rufus can access. Our coverage of how Amazon Rufus AI reads text in product images goes deeper into the Rekognition layer and what data extraction looks like in practice.
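If you manage alt text for many A+ modules, a small template helper keeps every entry naming the audience, the garment, and the occasion setting. The sketch below is one possible approach. The function name, the sentence template, and the 100-character `max_chars` default are assumptions for illustration, so check the current A+ Content guidelines for the real limit.

```python
def build_alt_text(audience, garment, setting, max_chars=100):
    """Compose alt text naming audience, garment, and occasion setting.

    max_chars is an assumed limit, not a documented Amazon value.
    """
    parts = {"audience": audience, "garment": garment, "setting": setting}
    empty = [name for name, value in parts.items() if not value.strip()]
    if empty:
        raise ValueError(f"missing alt-text fields: {', '.join(empty)}")
    text = f"{audience.strip()} in a {garment.strip()} at {setting.strip()}"
    if len(text) > max_chars:
        raise ValueError(f"alt text is {len(text)} chars; limit is {max_chars}")
    return text


alt = build_alt_text("Woman", "floral midi dress", "an outdoor garden wedding ceremony")
print(alt)
```

Requiring all three fields is the point of the design: alt text that names the garment but omits the setting or the audience carries no occasion signal at all.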

Practical test: Screenshot your main image gallery and look at it without reading any of your listing copy. Ask yourself: what occasions, audiences, and activities does this image set communicate on its own? That's approximately what Amazon's visual AI is extracting. If the answer is vague, your visual optimization has gaps.

Fashion Listing Optimization: Old Approach vs. Rufus-Aware Approach

The Gender Mismatch Problem and What to Do About It

The user research citation deserves its own section because it points to something fashion sellers have little visibility into: Rufus sometimes gets gender wrong, and when it does, it surfaces your product to the wrong audience while potentially suppressing it from the right one.

Published research from a 2025 human-computer interaction study found that Rufus users experienced frustration when the AI inferred the wrong gender from their queries and showed them mismatched products. The researchers noted this as one of the primary "personalization gaps" in Rufus's current architecture.

From an optimization standpoint, this means every ambiguous gender signal in your listing is a liability. Fashion sellers who sell unisex items face the most risk — if Rufus can't confidently classify the target gender, it may avoid recommending the product in gendered queries altogether to reduce the chance of a mismatch. The suppression patterns this creates are subtle and hard to detect without specifically testing Rufus responses to gendered queries about your ASINs.

The fix is explicit audience specificity. Not "fits most adults" but "cut for women's sizing, with particular fit notes for women 5'4" to 5'8" in sizes S–XL." If your product genuinely serves multiple genders, create separate ASINs with distinct visual and copy positioning rather than trying to serve all audiences from one listing.
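A simple scan can flag listing copy that leans on ambiguous audience phrasing. The sketch below is a minimal heuristic. The `AMBIGUOUS` and `EXPLICIT` phrase lists are hypothetical starting points rather than anything Amazon publishes, and a flag only means the copy deserves a manual look.

```python
import re

# Hypothetical phrase lists; extend them for your own category.
AMBIGUOUS = ("fits most adults", "unisex", "one size fits all", "for everyone")
EXPLICIT = ("women's", "men's", "girls'", "boys'")


def audience_signals(copy):
    """Collect ambiguous and explicit audience phrases found in listing copy."""
    text = copy.lower().replace("\u2019", "'")  # normalize curly apostrophes
    return {
        "ambiguous": [p for p in AMBIGUOUS if p in text],
        # The lookbehind stops "men's" from matching inside "women's".
        "explicit": [
            p for p in EXPLICIT
            if re.search(r"(?<![a-z])" + re.escape(p), text)
        ],
    }


def needs_review(signals):
    """Flag copy that is ambiguous or never states an audience explicitly."""
    return bool(signals["ambiguous"]) or not signals["explicit"]


before = "Relaxed tee that fits most adults."
after = "Cut for women's sizing, with fit notes for women 5'4\" to 5'8\" in sizes S-XL."

print(audience_signals(before), needs_review(audience_signals(before)))
print(audience_signals(after), needs_review(audience_signals(after)))
```

Running the check on the two example phrasings flags the "fits most adults" copy and passes the version with explicit women's sizing. It says nothing about images, so gallery consistency still needs a separate visual review.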

This connects to a broader suppression pattern we've documented — as detailed in our analysis of the 12 product attributes that trigger Amazon Rufus suppression, ambiguous audience signals are among the most consistent suppression factors across accounts we've reviewed.

The shift toward external AI citation sources is also relevant here — fashion blogs, styling guides, and editorial coverage of your product category are increasingly informing Rufus recommendations independently of your listing content.

Frequently Asked Questions

Does Amazon Rufus actually process fashion product images, or does it only use text?

Testing confirms Rufus pulls images into chat responses. Amazon Rekognition extracts visual data including clothing type, scene context, text overlays, and styling signals. Images are an active data source, not just visual decoration.

What are the most important fashion-specific fields for Rufus optimization?

Occasion keywords in bullets and backend, audience specificity (body type, age, lifestyle), subjective style descriptors ("flowy," "structured"), and activity context (date night, office, travel) — these outperform generic fashion keywords for Rufus matching.

How does Rufus handle occasion-based fashion queries differently from keyword search?

Rufus interprets occasion intent semantically, not through keyword matching. A query like "outfit for casual beach wedding" triggers context reasoning around formality level, setting, and audience — not just a search for those exact words in listings.

Why does Rufus sometimes recommend the wrong gender for fashion products?

Rufus infers gender from listing signals including images, copy, and structured attributes. Ambiguous signals — especially in unisex or gender-neutral listings — cause misclassification. Explicit audience language in copy and unambiguous model photography reduces this risk.

Should fashion sellers prioritize listing copy or images for Rufus visibility?

Both matter but serve different roles. Copy (title, bullets, description) feeds Rufus's text-based semantic matching. Images feed the visual recognition pipeline. Neither alone is sufficient — both need occasion and audience signals present.

What is the 5 SPN framework and how does it apply to fashion listings?

Amazon's WSDM '25 research identified five Subjective Product Need categories: Subjective Property, Event, Activity, Goal/Purpose, and Target Audience. Fashion listings that address all five are better positioned to surface in intent-based Rufus recommendations, not just keyword queries.

Does alt text on A+ content images affect Rufus recommendations?

Yes. Amazon processes alt text as structured data. Descriptive alt text that includes occasion and audience context ("women at an outdoor garden wedding") provides another data layer Rufus can extract when building its recommendation set.

How does Rufus score "vagueness" in fashion queries and what triggers clarifying questions?

Amazon's research describes a weighted vagueness score that triggers Rufus to ask follow-up questions when queries lack specific constraints. For fashion, vague queries often prompt Rufus to ask about occasion, body type, or formality level before recommending.

Are reviews important for Rufus fashion optimization?

Yes. Rufus analyzes review content for subjective experience data not found in catalog fields — how fabric feels, how true to size, how it holds up at events. Reviews that mention specific occasions and body fit experiences are particularly valuable signal sources.

Can one fashion ASIN effectively target multiple occasions in Rufus?

Yes, but only if each occasion is explicitly named and supported with visual context. Vague multi-occasion claims ("great for any event") score poorly because Rufus needs specific contextual anchors to match the listing to specific occasion-based queries.

Key Takeaways

Amazon Rufus uses visual recognition (Rekognition) alongside text processing — fashion images communicate occasion, gender, and styling context directly to the AI, not just to human shoppers.

Amazon's 5 SPN framework (Subjective Property, Event, Activity, Goal/Purpose, Target Audience) defines how Rufus classifies fashion queries. Listings that address all five categories are more likely to surface for intent-based searches.

Goal/Purpose is the most consistently missing SPN in fashion listings — describing what wearing the garment achieves for the customer, not just what the garment is.

Occasion-based queries use semantic reasoning, not keyword matching. Listing "wedding guest" once isn't enough — Rufus needs contextual support across copy, images, and structured data to confidently recommend.

The gender mismatch problem is real and measurable — ambiguous gender signals in listing copy and images cause Rufus misclassification that can functionally suppress products from high-intent gendered queries.

Text overlays on infographic slides are read by Rekognition — occasion and audience language embedded in images becomes structured data Rufus can access.

Alt text on A+ content is an underutilized optimization field with measurable impact on Rufus data extraction.

Disclaimer: Observations in this post are based on published Amazon research, documented platform testing, and peer-reviewed academic sources. Amazon does not publicly disclose all aspects of Rufus's ranking logic. Optimization strategies should be tested against your specific catalog and category.

References

Dammu, P.P.S., Alonso, O., & Poblete, B. (2025). A shopping agent for addressing subjective product needs. Proceedings of the 18th ACM International Conference on Web Search and Data Mining (WSDM '25). https://doi.org/10.1145/3701551.3704124

Lu, A. et al. (2025). Human and LLM agent shopping behavior study. ACM CHI '25 Conference on Human Factors in Computing Systems. [Cited for user experience observations on Rufus gender matching and personalization gaps.]

Amazon Web Services. (2024). Going beyond AI assistants: Examples from Amazon.com reinventing industries with generative AI. AWS Machine Learning Blog. https://aws.amazon.com/blogs/machine-learning/going-beyond-ai-assistants-examples-from-amazon-com-reinventing-industries-with-generative-ai/

Amazon Science. (2024). The technology behind Amazon's GenAI-powered shopping assistant Rufus. https://www.amazon.science/blog/the-technology-behind-amazons-genai-powered-shopping-assistant-rufus

Amazon Seller Central. (2025). Product image requirements and best practices. https://sellercentral.amazon.com/gp/help/external/G1881